PoplarML

Deploy production-ready ML models at scale with minimal engineering complexity

About PoplarML

Challenges It Solves

- Complex, resource-intensive ML deployment processes delay time-to-production

- Lack of standardized model serving infrastructure creates operational bottlenecks

- Difficulty managing model versions, monitoring, and governance at scale

- High engineering overhead diverts resources from core ML innovation

Key Features

Core capabilities at a glance

Automated Model Deployment

Production-ready models in minutes, not months

Deploy trained models with single-click simplicity

Scalable Infrastructure

Handle millions of predictions without manual scaling

Auto-scaling endpoints manage variable workloads efficiently
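As a rough illustration of what auto-scaling an endpoint involves, the sketch below applies the proportional rule used by the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × observed load / target load). It is not confirmed to be PoplarML's own scaling policy. The function name, the requests-per-second metric, and the replica bounds are assumptions made for illustration.

```python
import math


def desired_replicas(current_replicas: int,
                     observed_rps_per_replica: float,
                     target_rps_per_replica: float,
                     min_replicas: int = 1,
                     max_replicas: int = 20) -> int:
    """Proportional scaling rule: ceil(current * observed / target), clamped.

    All names and defaults are hypothetical; they only illustrate the idea
    of scaling an endpoint toward a per-replica load target.
    """
    if current_replicas < 1:
        raise ValueError("current_replicas must be at least 1")
    if target_rps_per_replica <= 0:
        raise ValueError("target_rps_per_replica must be positive")
    raw = math.ceil(
        current_replicas * observed_rps_per_replica / target_rps_per_replica
    )
    # Keep the result inside the configured bounds.
    return max(min_replicas, min(max_replicas, raw))
```

With four replicas each serving 150 requests per second against a target of 100, the rule asks for six replicas; when load falls well below target, the result shrinks but never drops under `min_replicas`.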

Model Versioning & Management

Track, compare, and roll back models with precision

Complete model lineage and version control built in

Real-time Monitoring & Analytics

Detect performance drift and anomalies instantly

24/7 monitoring with actionable performance insights
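One common way to quantify drift is the Population Stability Index (PSI), which compares how a feature or score is distributed in a baseline sample versus live traffic. The sketch below is a generic, standard-library implementation of that technique. Whether PoplarML uses PSI is not stated here; the function name, bin count, and smoothing constant are assumptions.

```python
import math
from typing import List, Sequence


def population_stability_index(baseline: Sequence[float],
                               live: Sequence[float],
                               bins: int = 10,
                               eps: float = 1e-6) -> float:
    """PSI between two samples, using equal-width bins over the baseline range."""
    if not baseline or not live:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    # Fall back to a unit width when every baseline value is identical.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range land in the first or last bin.
            idx = max(0, min(bins - 1, int((v - lo) / width)))
            counts[idx] += 1
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), eps) for c in counts]

    p = proportions(baseline)
    q = proportions(live)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))
```

Identical samples give a PSI of zero, and the value grows as the live distribution moves away from the baseline. A frequently quoted rule of thumb treats values above roughly 0.25 as a significant shift, though the right alert threshold depends on the model and the data.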

Enterprise Governance

Compliance and audit trails for regulated environments

Full audit logs and access controls for enterprise needs

API-First Architecture

Seamless integration with existing systems

REST and gRPC APIs enable rapid integration
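To give a sense of what a REST integration with a model-serving endpoint can look like, the sketch below builds (but does not send) a JSON prediction request using only the Python standard library. The host, URL path, payload shape, and bearer-token header are all hypothetical placeholders, not PoplarML's documented API; consult the vendor's API reference for the real contract.

```python
import json
import urllib.request
from typing import Any, List


def build_predict_request(base_url: str,
                          model: str,
                          version: str,
                          api_key: str,
                          inputs: List[Any]) -> urllib.request.Request:
    """Build a POST request for a hypothetical prediction endpoint.

    The path layout and the {"inputs": ...} body are illustrative assumptions.
    """
    url = f"{base_url.rstrip('/')}/models/{model}/versions/{version}:predict"
    body = json.dumps({"inputs": inputs}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
    )
```

A caller would pass the returned object to `urllib.request.urlopen` and decode the JSON response; building the request separately keeps the example testable without network access.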

Integrations

Seamlessly connect with your tech ecosystem

Kubernetes

Native Kubernetes integration for containerized model deployment and orchestration

Docker

Container-based deployment enabling consistent model environments across platforms

TensorFlow

Direct support for TensorFlow models with optimized serving endpoints

PyTorch

Seamless PyTorch model deployment with native runtime support

Apache Spark

Integration for distributed data processing and batch prediction workloads

AWS

Cloud-native deployment to AWS infrastructure with auto-scaling capabilities

Datadog

Performance monitoring integration for model metrics and infrastructure health

Jenkins

CI/CD pipeline integration for automated model testing and deployment

Implementation with AiDOOS

Outcome-based delivery with expert support

Outcome-Based

Pay for results, not hours

Milestone-Driven

Clear deliverables at each phase

Expert Network

Access to certified specialists

Alternatives & Comparisons

Find the right fit for your needs

| Capability | PoplarML | Falkonry LRS | Activechat.ai | VOGO Voice Platform |
|---|---|---|---|---|
| Customization | | | | |
| Ease of Use | | | | |
| Enterprise Features | | | | |
| Pricing | | | | |
| Integration Ecosystem | | | | |
| Mobile Experience | | | | |
| AI & Analytics | | | | |
| Quick Setup | | | | |

Similar Products

Explore related solutions

Falkonry LRS

Falkonry LRS: Accelerate Predictive Operations with Unified Machine Learning. Falkonry LRS is an adv…

Explore

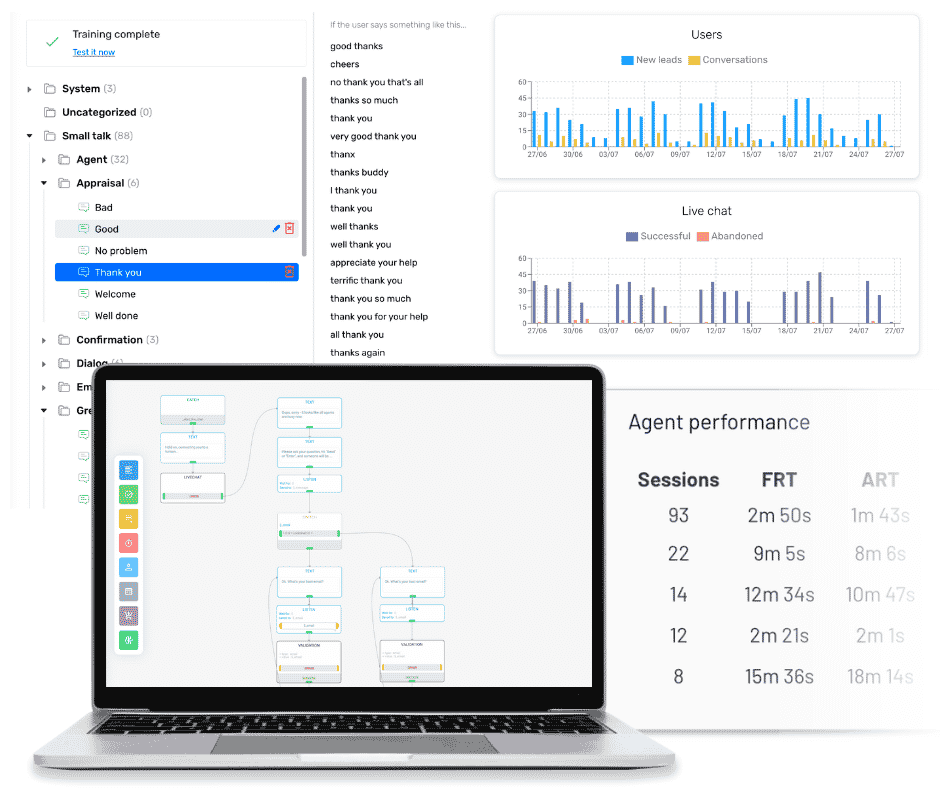

Activechat.ai

Activechat: Transform Customer Service with Intelligent Automation. Activechat is a cutting-edge 360…

VOGO Voice Platform

Deliver Seamless Voice Experiences Across Alexa and Google Assistant with VOGO. VOGO empowers busine…
