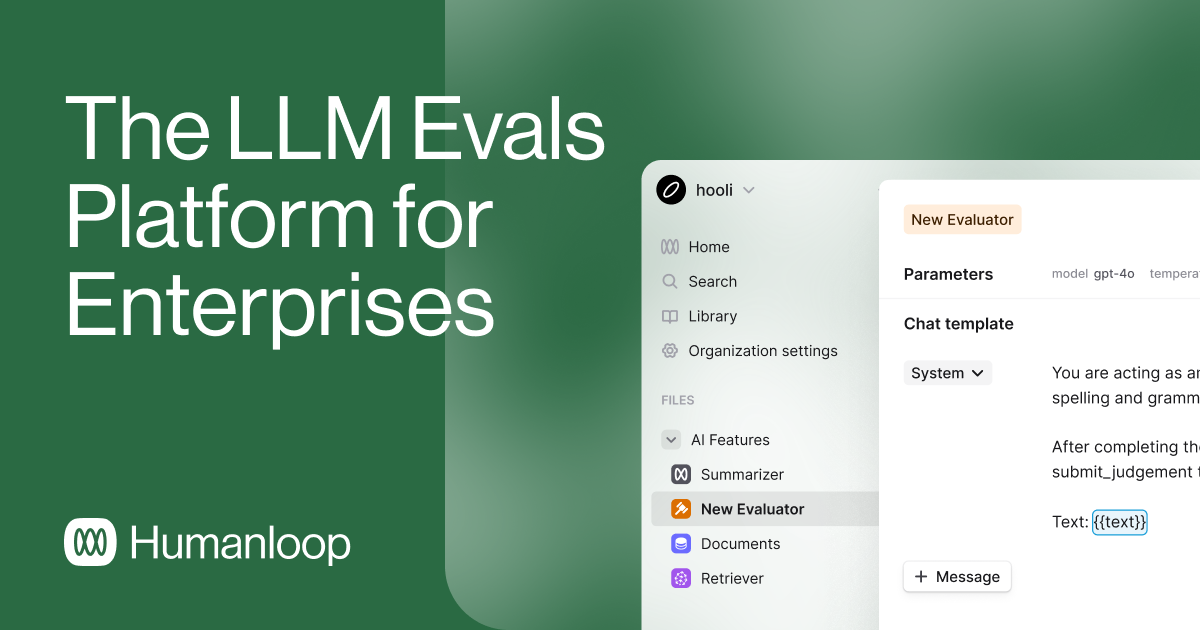

Humanloop

Enterprise-grade LLM evaluation platform for building reliable AI products at scale

About Humanloop

Challenges It Solves

- Difficulty systematically evaluating LLM outputs at scale with consistent quality metrics

- Lack of centralized prompt versioning and management across distributed teams

- Uncertainty about LLM reliability and performance before production deployment

- Difficulty collecting human feedback and incorporating it into model optimization loops

- Inability to monitor and measure LLM quality degradation in production

Key Features

Core capabilities at a glance

Comprehensive Prompt Management

Centrally version, organize, and deploy prompts

Eliminates prompt sprawl and ensures version control

Advanced A/B Testing

Compare model variants and prompt iterations systematically

Data-driven decisions on model and prompt selection

Human Feedback Integration

Collect human evaluations and incorporate them into the optimization loop

Continuously improve LLM quality with real-world feedback

Production Monitoring

Track LLM performance and quality metrics in real time

Proactive detection and remediation of quality issues

Evaluation Frameworks

Build custom metrics and automated evaluation pipelines

Standardized, repeatable evaluation across all models

API-First Architecture

Programmatic access to all evaluation and management functions

Seamless integration into existing AI workflows

Integrations

Seamlessly connect with your tech ecosystem

OpenAI GPT Models

Native integration with GPT-3.5 and GPT-4 for prompt management and evaluation

Anthropic Claude

Comprehensive support for Claude models with full evaluation capabilities

Google PaLM

Integration with Google's large language models for testing and optimization

Slack

Workflow integration for team notifications and approval processes

GitHub

Version control integration for prompt and configuration management

Datadog

Monitoring integration for LLM performance tracking and alerting

Webhooks

Custom integrations via webhook support for internal systems

Implementation with AiDOOS

Outcome-based delivery with expert support

Outcome-Based

Pay for results, not hours

Milestone-Driven

Clear deliverables at each phase

Expert Network

Access to certified specialists

Alternatives & Comparisons

Find the right fit for your needs

| Capability | Humanloop | Craiyon | Verint Messaging | WPCode |
|---|---|---|---|---|
| Customization | | | | |
| Ease of Use | | | | |
| Enterprise Features | | | | |
| Pricing | | | | |
| Integration Ecosystem | | | | |
| Mobile Experience | | | | |
| AI & Analytics | | | | |
| Quick Setup | | | | |

Similar Products

Explore related solutions

Craiyon

Unlock Creative Potential with Craiyon: AI-Powered Image Generation for Personal and Commercial Use…

Verint Messaging

Verint Messaging™ on AIDOOS: Scalable, Omnichannel Messaging for Modern Customer Engagement Verint …

WPCode

WPCode: Future-Proof Your WordPress Customizations with Powerful Code Snippets Join over 2,000,000 …