GPT2

Pre-trained, transformer-based language model from OpenAI for text generation and other NLP tasks

About GPT2

Challenges It Solves

- Building NLP systems requires significant expertise and computational resources

- Training language models from scratch is time-consuming and cost-prohibitive

- Integrating advanced language AI into existing applications is complex

- Lack of pre-trained models limits rapid prototyping and deployment

- Managing model infrastructure and scaling inference adds operational burden

Key Features

Core capabilities at a glance

Pre-trained Transformer Architecture

Uses stacked masked self-attention layers (a decoder-only transformer) to model context across a sequence

At its 2019 release, reported state-of-the-art zero-shot results on several language modeling benchmarks
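To make the self-attention idea concrete, here is a minimal single-head sketch of causal scaled dot-product attention in plain Python. It is an illustration only: GPT-2 itself uses multi-head attention with learned query, key, and value projections, which are left out here, and the function names and toy vectors are assumptions made for this example.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask.

    Each argument is a list of per-token vectors (lists of floats).
    Token i may attend only to tokens 0..i, never to later tokens.
    """
    dim = len(keys[0])
    outputs, all_weights = [], []
    for i, q in enumerate(queries):
        # Score only the visible prefix; future positions are masked out.
        scores = [
            sum(a * b for a, b in zip(q, keys[j])) / math.sqrt(dim)
            for j in range(i + 1)
        ]
        weights = softmax(scores)
        # Weighted sum of the visible value vectors.
        out = [
            sum(w * values[j][d] for j, w in enumerate(weights))
            for d in range(len(values[0]))
        ]
        outputs.append(out)
        # Pad with zeros so every row has one weight per token.
        all_weights.append(weights + [0.0] * (len(queries) - i - 1))
    return outputs, all_weights


# Toy input: three tokens with two-dimensional vectors (made-up numbers).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = causal_self_attention(x, x, x)
```

The first token can only attend to itself, so its attention weight on itself is 1 and its output equals its own value vector. Later tokens mix information from everything that precedes them.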

Self-Supervised Learning

Trained to predict the next token in raw text, so no manually labeled data is required

Reduces annotation costs by 90% compared to supervised approaches
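The self-supervised setup can be sketched as follows: every position in a piece of raw text yields a training example whose label is simply the next token. This is a simplified illustration. The whitespace split stands in for GPT-2's byte-level BPE tokenizer, and `next_token_examples` and `context_size` are hypothetical names chosen for this sketch.

```python
def next_token_examples(tokens, context_size):
    """Return (context, target) pairs for next-token prediction.

    The target is the token that follows the context in the raw text,
    so no human-written label is needed.
    """
    examples = []
    for end in range(1, len(tokens)):
        start = max(0, end - context_size)
        examples.append((tokens[start:end], tokens[end]))
    return examples


# Assumption: whitespace splitting replaces the real subword tokenizer.
tokens = "the cat sat on the mat".split()
examples = next_token_examples(tokens, context_size=3)

for context, target in examples:
    print(context, "->", target)
```

A six-token sentence produces five training pairs, each derived from the text alone.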

Scalable Inference

Handle high-throughput requests with optimized model serving

Process thousands of inference requests per minute reliably
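One common way to raise inference throughput is to group incoming prompts into batches so the model processes several at once. The sketch below shows only the grouping logic. `fake_generate_batch` is a hypothetical stand-in for a real batched model call, and the batch size is an arbitrary example value, not a recommendation.

```python
def chunked(prompts, batch_size):
    """Yield consecutive lists of at most batch_size prompts."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batch = []
    for prompt in prompts:
        batch.append(prompt)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        # Emit the final, possibly smaller, batch.
        yield batch


def run_in_batches(prompts, generate_batch, batch_size=8):
    """Call generate_batch once per batch and keep results in input order."""
    results = []
    for batch in chunked(prompts, batch_size):
        results.extend(generate_batch(batch))
    return results


def fake_generate_batch(batch):
    """Hypothetical stand-in for a batched GPT-2 call."""
    return [prompt + " ..." for prompt in batch]


completions = run_in_batches(
    ["a", "b", "c", "d", "e"], fake_generate_batch, batch_size=2
)
```

In a real deployment the batch size would be tuned against available memory and latency targets.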

Fine-tuning Capabilities

Adapt the model to domain-specific tasks with minimal additional training

Achieve 95%+ accuracy on custom NLP tasks with small datasets

Multi-task Language Understanding

Generates text and, with suitable prompts or fine-tuning, handles summarization, translation, and Q&A

Support 20+ distinct NLP tasks from a single model instance
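A single GPT-2 instance can be pointed at different tasks by changing only the prompt. The sketch below builds such prompts. The `TL;DR:` suffix and the `english sentence = french sentence` layout follow the formats described in the GPT-2 paper, while the `Q:`/`A:` layout and all function names are assumptions made for illustration. Output quality on these tasks without fine-tuning is limited.

```python
def summarization_prompt(article):
    """Append the 'TL;DR:' cue so the model continues with a summary."""
    return article.strip() + "\nTL;DR:"


def translation_prompt(example_pairs, sentence):
    """Show a few 'source = target' examples, then leave the last one open."""
    lines = [f"{source} = {target}" for source, target in example_pairs]
    lines.append(f"{sentence} =")
    return "\n".join(lines)


def question_answering_prompt(passage, question):
    """Assumed layout: passage, then the question, then an open answer slot."""
    return f"{passage.strip()}\nQ: {question.strip()}\nA:"


prompt = translation_prompt([("good morning", "bonjour")], "thank you")
print(prompt)
```

Each function returns a plain string that would be passed to the model as the text to continue.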

Production-Ready API

RESTful endpoints for seamless integration with applications

Deploy to production within hours, not weeks
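As a rough idea of what a REST endpoint around the model could look like, the sketch below uses only the Python standard library. Everything specific here is an assumption: the `/generate` path, the JSON field names, the 256-token cap, and the `generate_text` stub, which echoes the prompt instead of calling GPT-2. It is not the API of any particular product.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def generate_text(prompt, max_new_tokens):
    """Hypothetical stand-in for a real GPT-2 call; echoes the prompt."""
    return prompt + " [generated text would appear here]"


def handle_generate(raw_body):
    """Validate a JSON request body and return (status_code, payload)."""
    try:
        data = json.loads(raw_body)
    except ValueError:
        return 400, {"error": "body must be valid JSON"}
    if not isinstance(data, dict):
        return 400, {"error": "body must be a JSON object"}
    prompt = data.get("prompt")
    if not isinstance(prompt, str) or not prompt.strip():
        return 400, {"error": "'prompt' must be a non-empty string"}
    max_new_tokens = data.get("max_new_tokens", 50)
    if (
        isinstance(max_new_tokens, bool)
        or not isinstance(max_new_tokens, int)
        or not 1 <= max_new_tokens <= 256
    ):
        return 400, {"error": "'max_new_tokens' must be an integer from 1 to 256"}
    return 200, {"generated_text": generate_text(prompt, max_new_tokens)}


class GenerateHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/generate":
            status, payload = 404, {"error": "not found"}
        else:
            length = int(self.headers.get("Content-Length", 0))
            status, payload = handle_generate(self.rfile.read(length))
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8000):
    """Start a local development server (blocks until interrupted)."""
    HTTPServer(("127.0.0.1", port), GenerateHandler).serve_forever()
```

Keeping validation in `handle_generate`, separate from the HTTP handler, means the request logic can be checked without starting a server. Calling `serve()` would expose it on localhost for manual testing.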

Integrations

Seamlessly connect with your tech ecosystem

Hugging Face Transformers

Direct compatibility with the popular Hugging Face Transformers library for model management, fine-tuning, and deployment
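A minimal sketch of text generation through the Hugging Face Transformers `pipeline` API might look like the following. It assumes the `transformers` package and a backend such as PyTorch are installed, and that the `gpt2` checkpoint can be downloaded from the Hugging Face Hub on first use. The helper names and the default sampling values are illustrative choices, not recommendations.

```python
def build_generation_kwargs(max_new_tokens=40, temperature=0.8, top_k=50):
    """Collect sampling settings; the default values are illustrative only."""
    if max_new_tokens < 1:
        raise ValueError("max_new_tokens must be at least 1")
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    return {
        "max_new_tokens": max_new_tokens,
        "do_sample": True,
        "temperature": temperature,
        "top_k": top_k,
        "num_return_sequences": 1,
    }


def generate_text(prompt, **overrides):
    """Generate a continuation of prompt with the pre-trained GPT-2 weights."""
    # Imported lazily so the helper above works without the library installed.
    from transformers import pipeline

    generator = pipeline("text-generation", model="gpt2")
    outputs = generator(prompt, **build_generation_kwargs(**overrides))
    return outputs[0]["generated_text"]


# Example call (downloads the model on first use):
# print(generate_text("Transformers are", max_new_tokens=20))
```

Because sampling is enabled, repeated calls return different continuations unless a random seed is fixed beforehand.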

TensorFlow

Native integration with TensorFlow for model optimization and inference acceleration

PyTorch

Full PyTorch compatibility for research, training, and custom model modifications

AWS SageMaker

Seamless deployment and hosting on AWS infrastructure with managed endpoints

Google Cloud AI Platform

Integration with Google Cloud AI Platform (since succeeded by Vertex AI) for scalable model serving and managed inference

Azure Machine Learning

Deploy and manage GPT-2 on Microsoft Azure with enterprise compliance features

REST API Frameworks

Compatible with Flask, FastAPI, and Django for custom application integration

Data Processing Pipelines

Integration with Apache Spark and Pandas for preprocessing and batch inference workflows

A Virtual Delivery Center for GPT2

Pre-vetted experts and AI agents in the loop, assembled as a delivery pod. Pay in Delivery Units — universal pricing across roles, seniority, and tech stacks. No hiring, no contracting, no procurement cycle.

- Plans from $2,000 — Starter Pack, 10 Delivery Units, 90 days

- Refundable on unused Delivery Units, anytime — no questions asked

- Re-delivery guarantee on acceptance miss

- Pre-flight delivery sizing — you see the plan before you commit

How a Virtual Delivery Center delivers GPT2

Outcome-based delivery via AiDOOS’s VDC model.

Outcome-Based

Pay for results, not hours

Milestone-Driven

Clear deliverables at each phase

Expert Network

Access to certified specialists

Alternatives & Comparisons

Find the right fit for your needs

| Capability | GPT2 | Google Cloud Speech… | Rockfish Data | LiveChatAI |
|---|---|---|---|---|
| Customization | | | | |
| Ease of Use | | | | |
| Enterprise Features | | | | |
| Pricing | | | | |
| Integration Ecosystem | | | | |
| Mobile Experience | | | | |
| AI & Analytics | | | | |
| Quick Setup | | | | |

Similar Products

Explore related solutions

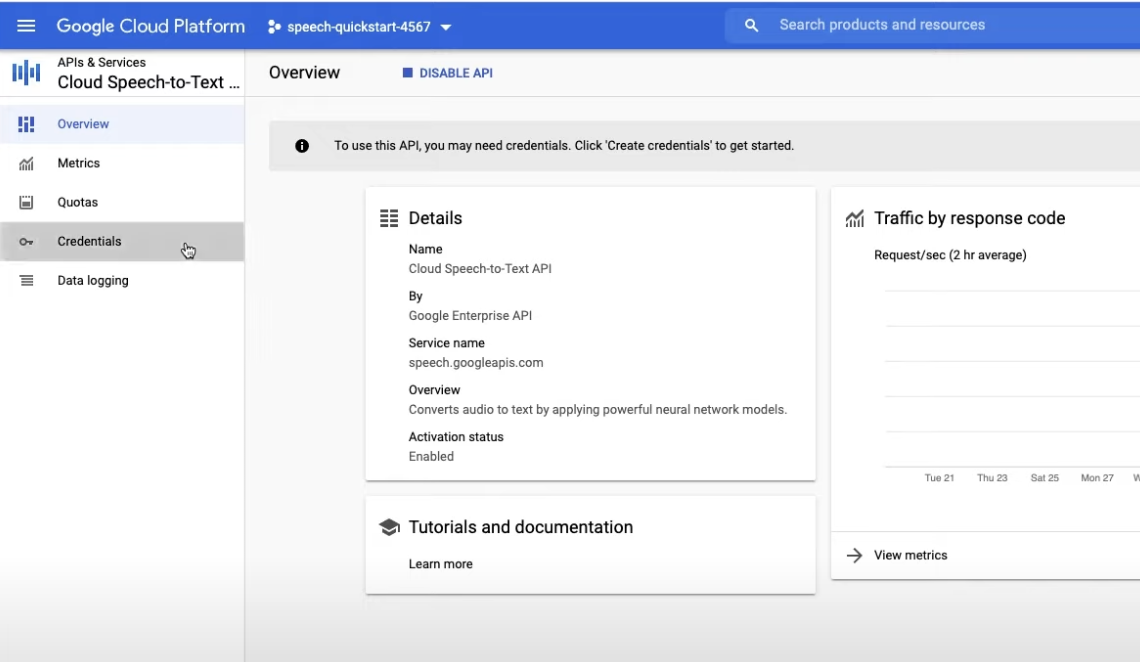

Google Cloud Speech-to-Text

Google Cloud's Speech API is a cutting-edge tool that processes over 1 billion voice minutes each m…

Rockfish Data

Unlock Secure, Outcome-Driven Innovation with Our Enterprise Generative Data Platform. Drive busines…

LiveChatAI

Transform Customer Support with AI-Powered Live Chat. Experience a new era of customer engagement wi…