GGML

Bring advanced machine learning to everyday hardware with optimized tensor operations.

About GGML

Challenges It Solves

- High computational costs limiting ML model deployment on standard hardware

- Dependency on expensive specialized infrastructure for advanced model inference

- Performance bottlenecks preventing real-time ML processing on edge devices

- Complexity in optimizing tensor operations across diverse hardware platforms

- Lack of efficient solutions for on-premise ML deployment

Key Features

Core capabilities at a glance

Multi-threaded Tensor Operations

Parallel processing for accelerated computations

A 4-8x speedup on multi-core systems, depending on core count and workload
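As an illustration of how a tensor operation can be divided among threads, the sketch below splits a matrix-vector product into contiguous row chunks and gives each chunk to a worker. The function names `matvec_rows` and `parallel_matvec` are hypothetical and this is not GGML's implementation. In CPython the global interpreter lock also prevents pure-Python arithmetic from running in parallel, so the example shows only the partitioning scheme, not the speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def matvec_rows(matrix, vector, start, stop):
    """Dot each of rows start..stop-1 with the vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix[start:stop]]


def parallel_matvec(matrix, vector, n_threads=4):
    """Split the rows into contiguous chunks, one task per chunk."""
    n_rows = len(matrix)
    chunk = max(1, (n_rows + n_threads - 1) // n_threads)  # ceiling division
    bounds = [(i, min(i + chunk, n_rows)) for i in range(0, n_rows, chunk)]
    with ThreadPoolExecutor(max_workers=n_threads) as pool:
        # map() preserves input order, so the parts come back in row order.
        parts = list(pool.map(lambda b: matvec_rows(matrix, vector, *b), bounds))
    return [y for part in parts for y in part]


matrix = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
vector = [1.0, -1.0]
result = parallel_matvec(matrix, vector, n_threads=2)
print(result)
```

Each output row depends only on its own input row, so the chunks need no locking. That independence is what makes this kind of operation scale with core count in a native implementation.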

SIMD Optimizations

Vector instruction-level performance enhancements

Significant speedup on modern CPUs through SIMD instruction sets (AVX and SSE on x86, NEON on ARM)

Quantization Support

Reduced model size and memory footprint

80-90% reduction in model size relative to 32-bit floating-point weights when quantizing to around 4 bits, with minimal accuracy loss
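To show where a reduction of that size can come from, here is a minimal sketch of block-wise 4-bit quantization. It assumes blocks of 32 weights that share one 16-bit scale. The block size, the rounding scheme, and the helper names `quantize_block`, `dequantize_block`, and `pack_block` are illustrative choices for this example, not GGML's actual code or file format.

```python
import struct

BLOCK_SIZE = 32  # weights per block (an assumption for this sketch)


def quantize_block(weights):
    """Map one block of floats to integers in [-7, 7] plus a shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7.0 if max_abs > 0 else 1.0
    quants = [max(-7, min(7, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate float values from the quantized block."""
    return [q * scale for q in quants]


def pack_block(scale, quants):
    """Store the scale as a 16-bit float and two 4-bit values per byte."""
    nibbles = [q + 8 for q in quants]  # shift into the unsigned 4-bit range
    body = bytes(lo | (hi << 4) for lo, hi in zip(nibbles[0::2], nibbles[1::2]))
    return struct.pack("<e", scale) + body


weights = [(i - 16) / 16.0 for i in range(BLOCK_SIZE)]
scale, quants = quantize_block(weights)
packed = pack_block(scale, quants)
restored = dequantize_block(scale, quants)

original_bytes = BLOCK_SIZE * 4  # 32-bit floats
reduction = 1 - len(packed) / original_bytes
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"{len(packed)} bytes instead of {original_bytes}: {reduction:.1%} smaller")
print(f"largest reconstruction error: {max_error:.4f}")
```

Under these assumptions each weight costs 4.5 bits on average (4 bits for the value plus its share of the scale), which falls inside the 80-90% range when compared against 32-bit floats. The accuracy cost is bounded by half the scale per weight.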

Lightweight Architecture

Minimal dependencies and small binary footprint

Easy deployment across diverse environments and devices

Cross-Platform Compatibility

Support for CPU, GPU, and specialized accelerators

Seamless execution across x86, ARM, and mobile platforms

Memory Efficiency

Optimized memory management and allocation

Run large models on devices with limited RAM
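A rough way to judge whether a model fits in RAM is to multiply its parameter count by the storage cost per weight. The sketch below does that for a hypothetical 7-billion-parameter model. The model size and the 4.5 bits-per-weight figure (4-bit values plus one 16-bit scale per 32 weights) are assumptions for illustration. The estimate covers the weights only, not activations or other runtime buffers.

```python
def weight_memory_gib(n_params, bits_per_weight):
    """Approximate memory needed to hold the weights, in GiB."""
    return n_params * bits_per_weight / 8 / 2**30


n_params = 7_000_000_000  # hypothetical model size
formats = [("float32", 32), ("float16", 16), ("4-bit blocks", 4.5)]

estimates = {label: weight_memory_gib(n_params, bits) for label, bits in formats}
for label, gib in estimates.items():
    print(f"{label:>12}: {gib:6.2f} GiB")
```

The ratio between two rows depends only on their bits per weight, so the same comparison applies to a model of any size.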

Integrations

Seamlessly connect with your tech ecosystem

Hugging Face Transformers

Direct integration with popular pre-trained models and model hub for seamless model deployment

LLaMA Models

Optimized support for LLaMA language models enabling efficient inference at scale

ONNX Runtime

ONNX model format support for cross-framework model compatibility

Docker Containers

Containerization support for simplified deployment and environment consistency

Kubernetes Orchestration

Integration with Kubernetes for scalable distributed inference workloads

Python Bindings

Native Python API for easy integration into existing ML workflows

REST API Frameworks

Compatible with FastAPI and Flask for building inference services

Monitoring Tools

Integration with Prometheus and other monitoring solutions for performance tracking

A Virtual Delivery Center for GGML

Pre-vetted experts and AI agents in the loop, assembled as a delivery pod. Pay in Delivery Units — universal pricing across roles, seniority, and tech stacks. No hiring, no contracting, no procurement cycle.

- Plans from $2,000 — Starter Pack, 10 Delivery Units, 90 days

- Refundable on unused Delivery Units, anytime — no questions asked

- Re-delivery guarantee on acceptance miss

- Pre-flight delivery sizing — you see the plan before you commit

How a Virtual Delivery Center delivers GGML

Outcome-based delivery via AiDOOS’s VDC model.

Outcome-Based

Pay for results, not hours

Milestone-Driven

Clear deliverables at each phase

Expert Network

Access to certified specialists

Similar Products

Explore related solutions

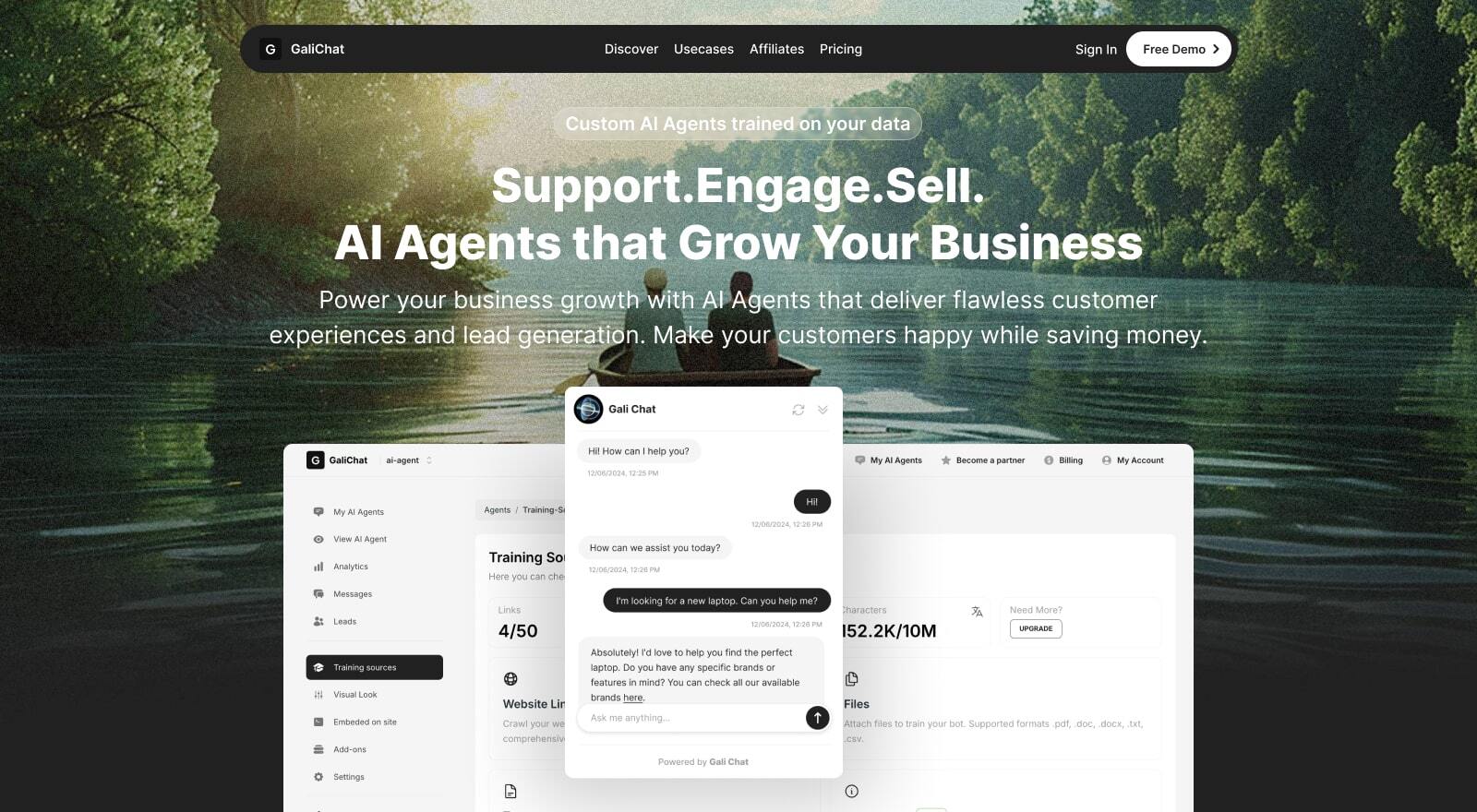

GaliChat

Transform Your Business with a 24/7 AI Support Assistant Unlock new growth opportunities and elevat…

Explore

Unleash

AI Enterprise Search Tool | Unleash with AiDOOS Integration Empower your teams with AI-powered ente…

Explore

RIFFIT Reader

Transform Reading into Music with RIFFIT Reader RIFFIT Reader revolutionizes the way you engage wit…

Explore