Galileo

End-to-end platform for building, evaluating, and monitoring generative AI applications with confidence

About Galileo

Challenges It Solves

- Difficulty validating and evaluating generative AI model outputs for quality and accuracy

- Lack of visibility into LLM application performance in production environments

- Time-consuming manual testing and refinement cycles delaying AI product launches

- Challenges ensuring consistent output quality across diverse use cases and scenarios

- Limited tools for monitoring and debugging failures in generative AI systems

Proven Results

Key Features

Core capabilities at a glance

Automated Evaluation Framework

Systematic assessment of LLM outputs

80% faster quality validation compared to manual review

Real-time Monitoring Dashboard

Complete visibility into application behavior

Immediate detection of performance degradation and anomalies

Generative Data Pipeline

Automated synthetic data and test case generation

Reduces manual data preparation time by 70%

Model Evaluation Metrics Library

Pre-configured evaluation criteria for common use cases

Deploy evaluation frameworks without custom coding

Production Observability Suite

Comprehensive logging and analytics for deployed models

Identify root causes of failures within minutes

Iterative Refinement Tools

Streamlined feedback loops for output improvement

Accelerate model optimization through structured experimentation

Real-World Use Cases

See how organizations drive results

Integrations

Seamlessly connect with your tech ecosystem

OpenAI API

Direct integration with GPT models for seamless prompt testing and evaluation

Anthropic Claude

Native support for Claude models with automated quality assessment

Hugging Face

Integration with the Hugging Face model hub for evaluating open-source LLMs

LangChain

Compatible with the LangChain framework for monitoring AI application chains

Prompt Management Tools

Version control and iteration tracking for prompt experiments

Data Platforms

Integration with data warehouses for evaluation dataset management

CI/CD Pipelines

Automated evaluation in development workflows and deployment gates

Slack/Teams

Notifications and alerts for critical monitoring events and test results
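
The deployment-gate idea listed under CI/CD Pipelines can be illustrated with a minimal, generic sketch. It does not use Galileo's API: the function names, the 0.8 threshold, and the score values are all hypothetical, and the scores are assumed to come from whatever evaluation run precedes the gate.

```python
from statistics import mean

# Hypothetical quality bar; a real pipeline would choose its own value.
MIN_MEAN_SCORE = 0.8


def gate_passes(scores, threshold=MIN_MEAN_SCORE):
    """Return True when the mean evaluation score meets the threshold."""
    if not scores:
        return False  # no evaluations ran, so fail closed
    return mean(scores) >= threshold


def exit_code(scores, threshold=MIN_MEAN_SCORE):
    """Map the gate result to a process exit code (0 continues, 1 blocks)."""
    return 0 if gate_passes(scores, threshold) else 1
```

A pipeline step could pass the result of `exit_code(scores)` to `sys.exit` so that a failing evaluation stops the deployment. Failing closed on an empty score list is a design choice in this sketch, not a documented Galileo behavior.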

Implementation with AiDOOS

Outcome-based delivery with expert support

Outcome-Based

Pay for results, not hours

Milestone-Driven

Clear deliverables at each phase

Expert Network

Access to certified specialists

Implementation Timeline

See how it works for your team

Alternatives & Comparisons

Find the right fit for your needs

| Capability | Galileo | Diffblue Cover | Writesonic | Amazon Transcribe |
|---|---|---|---|---|
| Customization | | | | |
| Ease of Use | | | | |
| Enterprise Features | | | | |
| Pricing | | | | |
| Integration Ecosystem | | | | |
| Mobile Experience | | | | |
| AI & Analytics | | | | |
| Quick Setup | | | | |

Similar Products

Explore related solutions

Diffblue Cover

Accelerate Java Unit Testing with Diffblue Cover. Diffblue Cover is the leading fully-autonomous, AI…

Explore

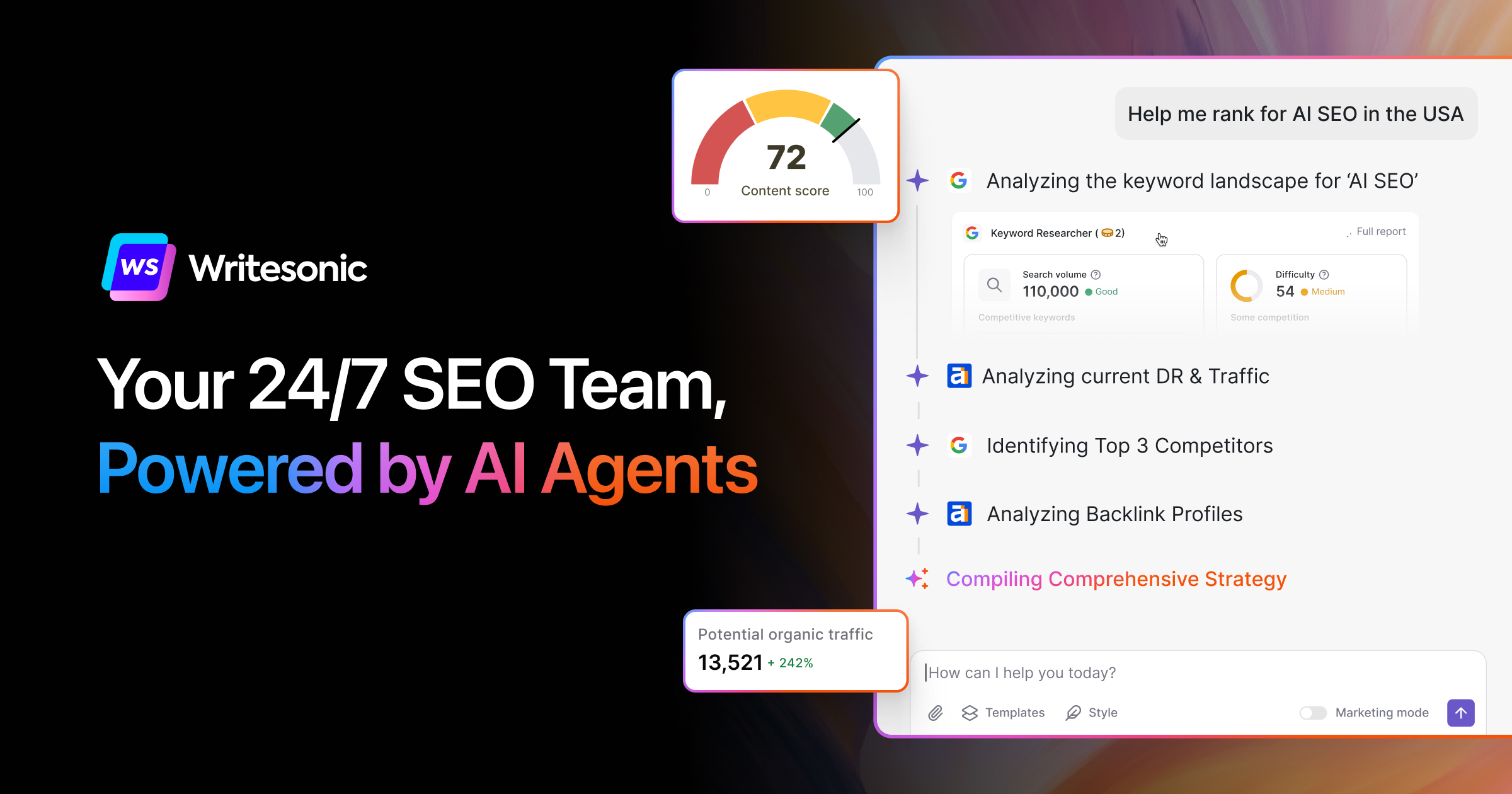

Writesonic

Writesonic: AI-Powered Content Creation for Unmatched Productivity. Writesonic is a cutting-edge AI …

Amazon Transcribe

Amazon Transcribe is the perfect solution for developers looking to incorporate speech to text tech…
