DiffSharp

Precision automatic differentiation for accelerated machine learning and scientific computing

About DiffSharp

Challenges It Solves

- Deriving accurate derivatives by hand is error-prone and time-consuming

- Numerical differentiation introduces truncation and round-off errors that compound in complex models

- Scaling automatic differentiation across distributed computing environments is difficult

- Integration of AD libraries into existing data pipelines requires significant custom development

- Maintaining consistency and precision in deep neural network gradient calculations is challenging

Key Features

Core capabilities at a glance

Dual-Mode Differentiation

Forward and reverse mode AD for optimal efficiency

Selects the better computation mode automatically based on problem dimensionality: forward mode when inputs are few, reverse mode when outputs are few
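
To make the difference between the two modes concrete, here is a minimal sketch in plain Python. It is not DiffSharp's API; the `Dual` and `Var` classes, the helper names, and the example function f(x, y) = x*y + sin(x) are all illustrative assumptions. Forward mode needs one pass per input, while reverse mode recovers every partial derivative from a single backward sweep.

```python
import math

class Dual:
    """Forward mode: a value paired with its derivative along one input direction."""
    def __init__(self, value, deriv=0.0):
        self.value, self.deriv = value, deriv
    def __add__(self, other):
        return Dual(self.value + other.value, self.deriv + other.deriv)
    def __mul__(self, other):
        # Product rule
        return Dual(self.value * other.value,
                    self.value * other.deriv + self.deriv * other.value)

def dual_sin(d):
    return Dual(math.sin(d.value), math.cos(d.value) * d.deriv)

class Var:
    """Reverse mode: a graph node that remembers its parents and local partials."""
    def __init__(self, value, parents=()):
        self.value, self.parents, self.grad = value, parents, 0.0
    def __add__(self, other):
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))
    def __mul__(self, other):
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))

def var_sin(v):
    return Var(math.sin(v.value), ((v, math.cos(v.value)),))

def f(x, y, sin):
    # Hypothetical example function f(x, y) = x*y + sin(x), written once for both modes.
    return x * y + sin(x)

def grad_forward(x, y):
    # One pass per input: seed the derivative of one input with 1.0 at a time.
    dx = f(Dual(x, 1.0), Dual(y, 0.0), dual_sin).deriv
    dy = f(Dual(x, 0.0), Dual(y, 1.0), dual_sin).deriv
    return dx, dy

def grad_reverse(x, y):
    # One forward pass builds the graph; one backward sweep yields all partials.
    vx, vy = Var(x), Var(y)
    out = f(vx, vy, var_sin)
    order, seen = [], set()
    def visit(node):
        # Depth-first post-order gives a topological ordering of the graph.
        if id(node) not in seen:
            seen.add(id(node))
            for parent, _ in node.parents:
                visit(parent)
            order.append(node)
    visit(out)
    out.grad = 1.0
    for node in reversed(order):
        for parent, local in node.parents:
            parent.grad += local * node.grad
    return vx.grad, vy.grad

forward = grad_forward(1.0, 2.0)
reverse = grad_reverse(1.0, 2.0)
print(forward, reverse)  # both approximate (2 + cos(1), 1)
```

With two inputs and one output, reverse mode does less work here. For a function with one input and many outputs the balance tips toward forward mode, which is why the choice depends on dimensionality.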

Functional Programming Paradigm

Pure, composable derivative operations

Eliminates side effects, ensuring reproducible and verifiable computations

Exact Derivative Computation

No truncation error in gradient calculations

Derivatives are exact up to floating-point rounding, avoiding the step-size error that finite differences introduce into optimization
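
The contrast with numerical differentiation can be sketched in a few lines of plain Python. This is an illustration of the technique only, not DiffSharp code; the `Dual` class, `cube`, and the chosen step sizes are assumptions made for the example. A forward finite difference carries an error that depends on the step size h, while the dual-number derivative applies the product rule exactly.

```python
class Dual:
    """A value together with its derivative (forward-mode AD)."""
    def __init__(self, value, deriv):
        self.value, self.deriv = value, deriv
    def __mul__(self, other):
        # Product rule, applied exactly at every multiplication
        return Dual(self.value * other.value,
                    self.value * other.deriv + self.deriv * other.value)

def cube(x):
    # Works for plain floats and for Dual numbers alike
    return x * x * x

def finite_difference(f, x, h):
    # Forward difference: truncation error grows with h
    return (f(x + h) - f(x)) / h

def ad_derivative(f, x):
    # Seed the input derivative with 1.0 and read the output derivative
    return f(Dual(x, 1.0)).deriv

x0 = 2.0
exact = 3 * x0 ** 2  # analytic derivative of x^3 at x = 2, i.e. 12.0
ad_error = abs(ad_derivative(cube, x0) - exact)
fd_errors = {h: abs(finite_difference(cube, x0, h) - exact)
             for h in (1e-2, 1e-4, 1e-6)}

print("AD error:", ad_error)
for h, err in fd_errors.items():
    print(f"finite difference, h={h:g}: error {err:.3e}")
```

Shrinking h reduces the truncation error but eventually amplifies round-off, so finite differences always involve a trade-off that automatic differentiation avoids.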

Higher-Order Derivatives

Compute derivatives of derivatives efficiently

Enables Hessian computation and advanced numerical methods
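
One way to obtain second derivatives is to nest forward-mode dual numbers, so that the derivative part of a dual number is itself a dual number. The sketch below is plain Python under that assumption and does not reflect how DiffSharp implements or exposes Hessians; `Dual`, `hessian`, and the example function are hypothetical names.

```python
class Dual:
    """Dual number whose parts may themselves be Dual; nesting gives second order."""
    def __init__(self, primal, tangent=0.0):
        self.primal, self.tangent = primal, tangent

    @staticmethod
    def lift(v):
        # Treat plain numbers as constants with zero derivative
        return v if isinstance(v, Dual) else Dual(v, 0.0)

    def __add__(self, other):
        other = Dual.lift(other)
        return Dual(self.primal + other.primal, self.tangent + other.tangent)
    __radd__ = __add__

    def __mul__(self, other):
        other = Dual.lift(other)
        return Dual(self.primal * other.primal,
                    self.primal * other.tangent + self.tangent * other.primal)
    __rmul__ = __mul__

def hessian(f, point):
    """H[i][j] = d^2 f / (dx_i dx_j), one nested forward pass per entry."""
    n = len(point)
    H = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            # Inner level perturbs input j, outer level perturbs input i.
            args = [Dual(Dual(x, 1.0 if k == j else 0.0),
                         Dual(1.0 if k == i else 0.0, 0.0))
                    for k, x in enumerate(point)]
            H[i][j] = f(*args).tangent.tangent
    return H

def f(x, y):
    # Example: f(x, y) = x^2 * y + y^3
    return x * x * y + y * y * y

H = hessian(f, [1.0, 2.0])
print(H)  # [[4.0, 2.0], [2.0, 12.0]]
```

This version evaluates the function once per Hessian entry, which keeps the sketch short. Combining forward and reverse mode is a common way to reduce that cost for larger problems.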

GPU Acceleration Support

Leverage GPU compute for scalable differentiation

Accelerates batch processing and large-scale scientific computations

Integrations

Seamlessly connect with your tech ecosystem

F# / .NET Ecosystem

Written in F# for the .NET platform, so it plugs directly into existing .NET enterprise environments

TensorFlow

Complement or replace TensorFlow's automatic differentiation for specific numerical computing tasks requiring higher precision

PyTorch

Interoperable with PyTorch workflows through data format compatibility and gradient interchange protocols

Jupyter Notebooks

Full support for interactive scientific computing and rapid prototyping of differential computations

Julia Scientific Computing

Integration with Julia language for high-performance numerical and scientific computing applications

Cloud Computing Platforms

Deployment on Azure, AWS, and GCP with AiDOOS governance and resource orchestration

Data Pipeline Frameworks

Integration with Apache Spark and Dask for distributed automatic differentiation in large-scale ML pipelines

Implementation with AiDOOS

Outcome-based delivery with expert support

Outcome-Based

Pay for results, not hours

Milestone-Driven

Clear deliverables at each phase

Expert Network

Access to certified specialists

Similar Products

Explore related solutions

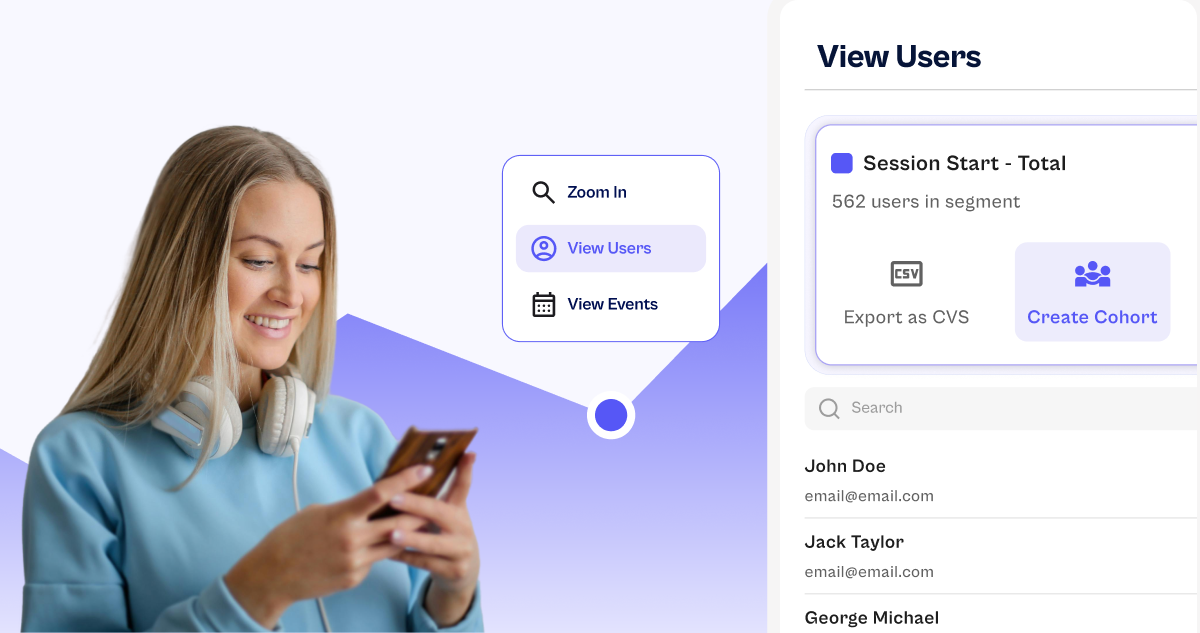

B2Metric

B2Metric: AI-Powered Insights for Smarter Marketing and Customer Engagement B2Metric is an advanced…

Explore

Exploratory

Transform Data into Actionable Insights with Exploratory Exploratory is a powerful, intuitive platf…

Explore

iSenseHUB

iSenseHUB: Transform Your Workflow with AI-Powered Solutions Unlock the full potential of artificia…

Explore