Deep Learning Containers

Enterprise-grade containerized deep learning infrastructure for accelerated AI model deployment

About Deep Learning Containers

Challenges It Solves

- Complex infrastructure setup and dependency management delays ML project timelines

- Inconsistent model performance across development, testing, and production environments

- GPU resource inefficiency and high infrastructure costs for deep learning workloads

- Lack of standardized containerization leading to reproducibility and scaling challenges

- Integration complexity between ML pipelines and existing enterprise systems

Key Features

Core capabilities at a glance

Pre-Optimized Framework Containers

GPU-accelerated environments ready for immediate use

Eliminate setup time and start training models immediately

Automated Scaling and Orchestration

Intelligent resource allocation across distributed infrastructure

Achieve 3x faster training with automatic load balancing

Model Versioning and Reproducibility

Complete audit trail for all model iterations and experiments

Ensure 100% reproducible results across training cycles
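As a rough illustration of what reproducibility across training cycles involves, the sketch below pins a random seed and derives a stable fingerprint from a run configuration so that two runs can be matched later. It uses only the Python standard library. The names `seed_everything` and `config_fingerprint` and the configuration keys are hypothetical and are not part of any Deep Learning Containers API.

```python
import hashlib
import json
import random


def seed_everything(seed: int) -> None:
    """Seed Python's built-in RNG.

    Frameworks such as PyTorch or TensorFlow keep their own RNG state
    and would need to be seeded separately.
    """
    random.seed(seed)


def config_fingerprint(config: dict) -> str:
    """Return a short hash of a run configuration that ignores key order."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


# Hypothetical run configuration, for illustration only.
run_config = {"framework": "pytorch", "learning_rate": 0.001, "seed": 42}

seed_everything(run_config["seed"])
first_draw = [random.random() for _ in range(3)]

seed_everything(run_config["seed"])
second_draw = [random.random() for _ in range(3)]

print(first_draw == second_draw)       # compares the two seeded sequences
print(config_fingerprint(run_config))  # identifier to store alongside the run
```

Storing the fingerprint next to each trained model makes it easy to tell whether two model versions came from the same configuration.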

Multi-Framework Support

Native support for TensorFlow, PyTorch, and Keras

Eliminate framework compatibility issues and technical debt

Integrated Monitoring and Logging

Real-time performance metrics and resource utilization tracking

Reduce debugging time by 50% with comprehensive visibility
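To make the idea of performance metrics concrete, here is a minimal sketch of step-level timing and logging using only the Python standard library. The `timed_step` helper, the `step_timings` list, and the step name are assumptions made for illustration; they do not describe the product's actual monitoring interface.

```python
import logging
import time
from contextlib import contextmanager

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
logger = logging.getLogger("training")

# Collected (step name, seconds) pairs. A real setup would export these
# to a metrics backend rather than keep them in memory.
step_timings = []


@contextmanager
def timed_step(name: str):
    """Log how long the wrapped block takes and record the duration."""
    start = time.perf_counter()
    try:
        yield
    finally:
        elapsed = time.perf_counter() - start
        step_timings.append((name, elapsed))
        logger.info("step=%s duration_s=%.4f", name, elapsed)


with timed_step("load_batch"):
    time.sleep(0.01)  # stand-in for real work
```

Wrapping each phase of a training loop this way gives a per-step record that can be compared across runs when a slowdown needs to be tracked down.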

Integrations

Seamlessly connect with your tech ecosystem

Kubernetes

Native orchestration and management of containerized deep learning workloads across clusters
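For orientation, the sketch below builds a minimal Kubernetes Pod manifest that requests one GPU for a containerized training job. It assumes the cluster runs the NVIDIA device plugin, which exposes the `nvidia.com/gpu` resource. The function name, Pod name, image reference, and `train.py` entry point are hypothetical placeholders.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Build a minimal Pod manifest that requests NVIDIA GPUs."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": "trainer",
                    "image": image,
                    "command": ["python", "train.py"],
                    # Extended resources such as GPUs are requested under limits.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


# Placeholder names, for illustration only.
manifest = gpu_pod_manifest("dl-training-job", "registry.example.com/dl/pytorch:latest")
print(json.dumps(manifest, indent=2))
```

The printed JSON can be saved to a file and applied with `kubectl apply -f`, since Kubernetes accepts manifests in JSON as well as YAML.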

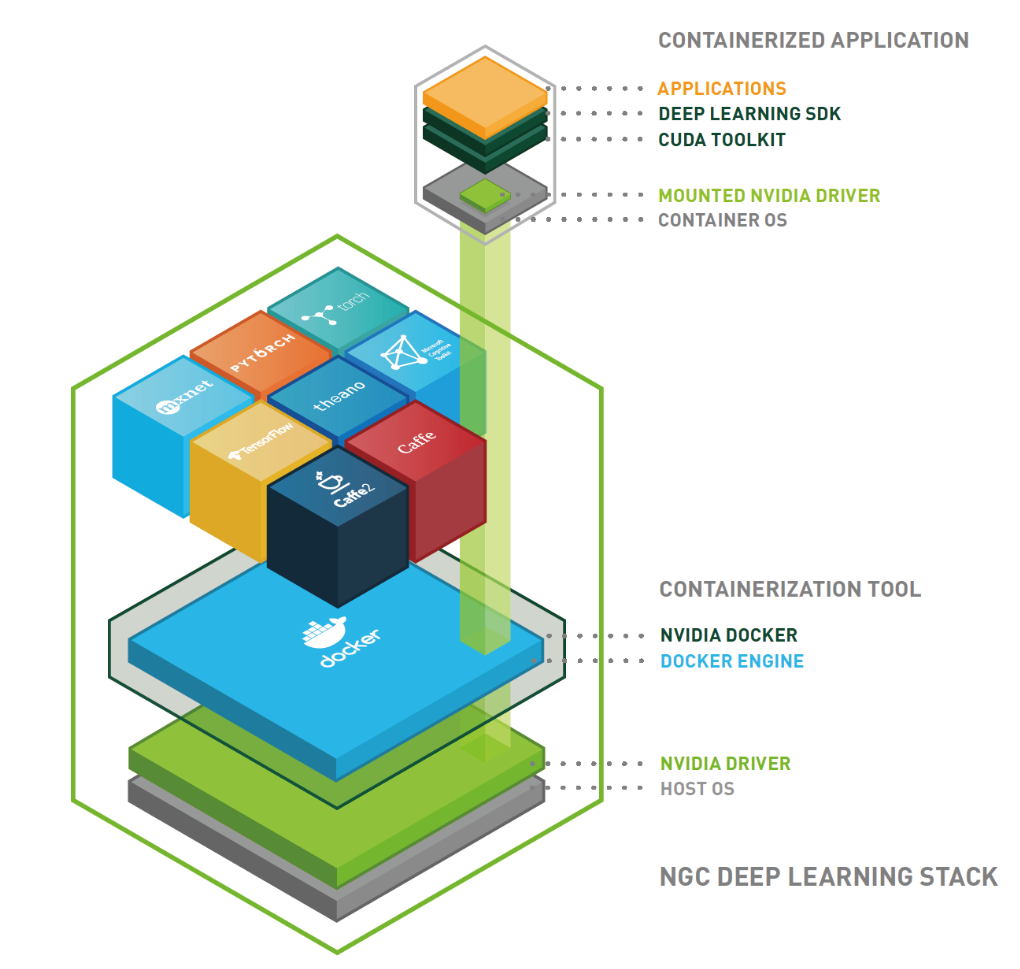

Docker

Containerization foundation with optimized images for deep learning frameworks

TensorFlow

Pre-configured environments with TensorFlow runtime, CUDA, and cuDNN optimization

PyTorch

Integrated PyTorch ecosystem with distributed training and GPU acceleration

AWS SageMaker

Seamless deployment and management of models in AWS cloud infrastructure

Azure ML

Integration with Azure Machine Learning pipelines and compute resources

MLflow

Model tracking, versioning, and reproducibility across experiment lifecycle

Implementation with AiDOOS

Outcome-based delivery with expert support

Outcome-Based

Pay for results, not hours

Milestone-Driven

Clear deliverables at each phase

Expert Network

Access to certified specialists

Alternatives & Comparisons

Find the right fit for your needs

| Capability | Deep Learning Containers | TruEra Monitoring | Jotengine | Concerto AI |
|---|---|---|---|---|
| Customization | | | | |
| Ease of Use | | | | |
| Enterprise Features | | | | |
| Pricing | | | | |
| Integration Ecosystem | | | | |
| Mobile Experience | | | | |
| AI & Analytics | | | | |
| Quick Setup | | | | |

Similar Products

Explore related solutions

TruEra Monitoring

Transform Machine Learning Operations with TruEra Monitoring. TruEra Monitoring is a powerful soluti…

Jotengine

Jotengine transforms conversations and meetings into written transcripts and video captions, boosti…

Concerto AI

Transform Customer Engagement with AI-Powered Automated Conversations. Revolutionize the way your bu…
